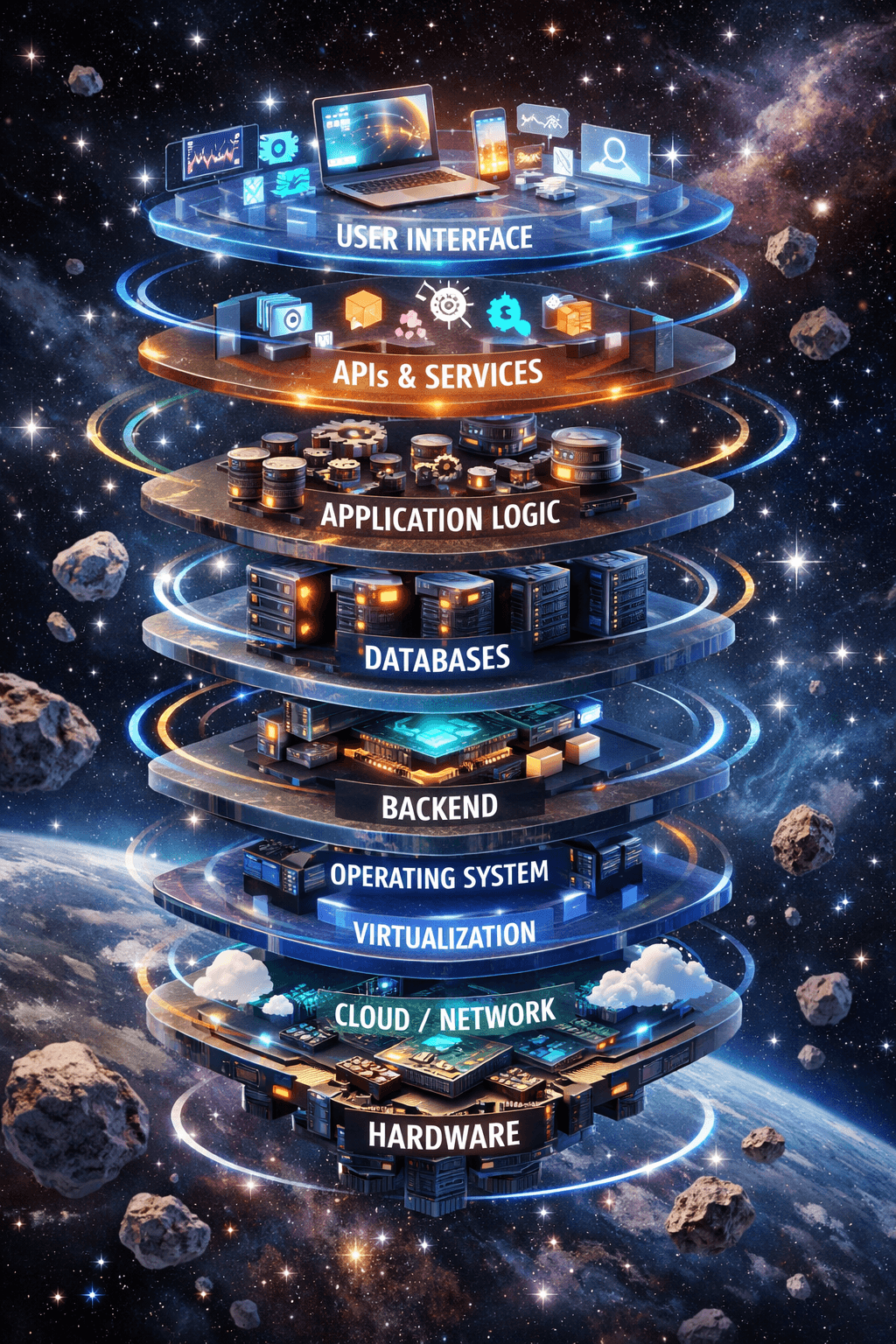

The AI Engineering Stack Powering Our Ventures

At Donkey Ideas, building ventures that leverage artificial intelligence requires more than a great idea; it demands a robust, scalable, and efficient engineering foundation. Over years of developing AI-powered products, we have refined a core stack that balances cutting-edge capability with production stability. This stack isn't just a collection of tools; it's a strategic framework that accelerates our venture-building process from prototype to scale. In this post, we'll pull back the curtain on the essential layers of the AI engineering stack we deploy for every new venture.

The Foundational Layer: Cloud Infrastructure & Orchestration

Every modern AI application begins with a solid cloud foundation. We primarily build on platforms like AWS and Google Cloud Platform, which offer the elastic compute, specialized hardware (such as GPUs and, on Google Cloud, TPUs), and managed services that AI workloads need. For container orchestration, Kubernetes has become non-negotiable. It lets us package, deploy, and manage microservices, including model training pipelines and inference endpoints, with high availability and efficient resource utilization. We define this infrastructure as code, typically with Terraform, so that every venture's environment is reproducible, scalable, and cost-optimized from day one.
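To make the orchestration layer concrete, here is a minimal sketch of the kind of Kubernetes Deployment an inference endpoint might run as, generated from Python so it can be versioned next to the service code. It is an illustration, not one of our production manifests: the service name, image URL, port 8080, the `/healthz` readiness path, and the CPU/memory sizes are all hypothetical placeholders. In practice a manifest like this would more often live in Terraform or Helm configuration.

```python
import json


def inference_deployment(name: str, image: str, replicas: int = 2,
                         gpu: bool = False, port: int = 8080) -> dict:
    """Build a Kubernetes apps/v1 Deployment manifest for a model inference service."""
    labels = {"app": name}
    requests = {"cpu": "1", "memory": "2Gi"}  # hypothetical sizing
    limits = {"cpu": "2", "memory": "4Gi"}
    if gpu:
        # "nvidia.com/gpu" is the extended resource advertised by the NVIDIA
        # device plugin; when only a limit is set, the request defaults to it.
        limits["nvidia.com/gpu"] = "1"
    container = {
        "name": name,
        "image": image,
        "ports": [{"containerPort": port}],
        "resources": {"requests": requests, "limits": limits},
        # Hypothetical health endpoint; the pod receives Service traffic
        # only after this probe succeeds.
        "readinessProbe": {"httpGet": {"path": "/healthz", "port": port}},
    }
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template's labels.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [container]},
            },
        },
    }


if __name__ == "__main__":
    manifest = inference_deployment(
        "sentiment-api", "registry.example.com/sentiment-api:1.0.0", replicas=3, gpu=True
    )
    # kubectl accepts JSON as well as YAML: kubectl apply -f deployment.json
    print(json.dumps(manifest, indent=2))
```

Setting replicas above one and gating traffic on a readiness probe is what lets Kubernetes deliver the high availability described above: a replica that is still loading model weights simply doesn't receive requests yet.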

Data Management & Feature Stores

High-quality data is the fuel for AI, so our stack emphasizes tools for robust data ingestion, transformation, and management. We use Apache Airflow or Prefect to orchestrate complex data pipelines. To manage the features used to train models, we implement feature stores, which keep feature definitions consistent between training and serving. Solutions like Feast or Tecton help us avoid training-serving skew, where a model sees features computed one way during training and another way in production, a pitfall highlighted in Google's research on ML systems. Keeping the two paths aligned helps our models perform reliably in production.
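The core idea a feature store enforces can be shown without either product. In this minimal sketch, a single function is the only place feature logic lives, and both the offline (training) and online (serving) paths call it, so the two cannot silently diverge. The `Order` record, the `order_features`, `training_rows`, and `serving_features` helpers, and the two features themselves are hypothetical illustrations, not part of the Feast or Tecton APIs.

```python
from __future__ import annotations

import math
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Order:
    """Hypothetical raw record that features are derived from."""
    amount: float
    created_at: datetime


def order_features(order: Order, as_of: datetime) -> dict[str, float]:
    """The single definition of feature logic, shared by training and serving."""
    age_days = (as_of - order.created_at).total_seconds() / 86_400
    return {
        "log_amount": math.log1p(order.amount),
        "age_days": round(age_days, 3),
    }


def training_rows(orders: list[Order], snapshot: datetime) -> list[dict[str, float]]:
    """Offline path: compute features as of a fixed snapshot time."""
    return [order_features(o, snapshot) for o in orders]


def serving_features(order: Order, now: datetime | None = None) -> dict[str, float]:
    """Online path: the same function, evaluated at request time."""
    if now is None:
        now = datetime.now(timezone.utc)
    return order_features(order, now)


if __name__ == "__main__":
    snapshot = datetime(2024, 1, 10, tzinfo=timezone.utc)
    order = Order(amount=99.0, created_at=datetime(2024, 1, 8, tzinfo=timezone.utc))
    # Both paths yield identical features for the same order and timestamp.
    assert training_rows([order], snapshot)[0] == serving_features(order, now=snapshot)
    print(serving_features(order, now=snapshot))
```

A real feature store adds what this sketch lacks, such as point-in-time-correct retrieval of historical features for training and a low-latency online store for serving, but the principle is the same: one definition, two consumers.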

The Model Development Core

This is where the algorithmic magic happens. Our default language is Python, and we rely heavily on frameworks like PyTorch and TensorFlow for deep learning. For rapid prototyping and classical machine learning, scikit-learn remains indispensable. Writing model code, however, is only part of the story. We use MLflow or Weights & Biases to meticulously track experiments, log parameters and metrics, and version models. This turns the often-chaotic process of model development into a reproducible, collaborative science, a discipline that pays dividends as we iterate on our portfolio ventures.
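To show what "tracking an experiment" records, here is a tiny standard-library stand-in for a tracker: each run's hyperparameters and results are appended as one JSON line, and the best run can be retrieved by metric. The `RunTracker` class and its methods are hypothetical and are not the MLflow or Weights & Biases API; they only mirror the basic idea.

```python
import json
import tempfile
import uuid
from pathlib import Path


class RunTracker:
    """Hypothetical, minimal experiment tracker: appends one JSON line per run."""

    def __init__(self, log_path):
        self.log_path = Path(log_path)

    def log_run(self, params: dict, metrics: dict) -> str:
        """Record a run's hyperparameters and results; return its generated ID."""
        run_id = uuid.uuid4().hex
        record = {"run_id": run_id, "params": params, "metrics": metrics}
        with self.log_path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record, sort_keys=True) + "\n")
        return run_id

    def runs(self) -> list:
        """Load every logged run, oldest first."""
        if not self.log_path.exists():
            return []
        with self.log_path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]

    def best_run(self, metric: str, maximize: bool = True) -> dict:
        """Return the run with the best value of `metric`."""
        candidates = [r for r in self.runs() if metric in r["metrics"]]
        if not candidates:
            raise ValueError(f"no run has logged metric {metric!r}")
        pick = max if maximize else min
        return pick(candidates, key=lambda r: r["metrics"][metric])


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        tracker = RunTracker(Path(tmp) / "runs.jsonl")
        tracker.log_run({"learning_rate": 0.1}, {"val_accuracy": 0.80})
        tracker.log_run({"learning_rate": 0.01}, {"val_accuracy": 0.91})
        print(tracker.best_run("val_accuracy")["params"])  # → {'learning_rate': 0.01}
```

With MLflow itself, the equivalent calls are `mlflow.log_param` and `mlflow.log_metric` inside a `mlflow.start_run()` block, and the tracking server adds a UI, artifact storage, and a model registry on top of the raw records.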

From Training to Deployment: The MLOps Bridge

One of the largest gaps in AI projects is the move from a working notebook to a live, continuously updated service. Our stack bridges it with a strong MLOps (Machine Learning Operations) layer. We use tools like Kubeflow or MLflow Projects to package and automate the retraining of models on new data. For serving models at scale, we prefer high-performance inference servers like TorchServe and TensorFlow Serving, or managed options like Amazon SageMaker endpoints. The entire pipeline is monitored for data drift, model performance decay, and infrastructure health, allowing for proactive maintenance.
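Drift monitoring can take many forms. As one hedged illustration, not necessarily the metric any given venture uses, here is the Population Stability Index (PSI), a widely used drift score for a single numeric feature. It bins a training-time baseline at its quantiles, measures the share of baseline values (e_i) and live values (a_i) in each bin, and sums (a_i - e_i) * ln(a_i / e_i). The 0.1 and 0.25 cut-offs in `drift_status` are common rules of thumb, not a standard, and any retraining trigger built on them would be an assumption to tune per model.

```python
import math
from bisect import bisect_right


def _bin_fractions(values, edges, eps=1e-6):
    """Fraction of `values` falling in each bin defined by sorted interior `edges`."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # Floor empty bins at eps so the log term stays finite.
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(expected, actual, n_bins=10):
    """PSI between a baseline sample (`expected`) and a live sample (`actual`).

    Bin edges come from the baseline's quantiles, so each bin holds roughly
    1/n_bins of the training-time data.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    ordered = sorted(expected)
    edges = [ordered[len(ordered) * i // n_bins] for i in range(1, n_bins)]
    e = _bin_fractions(expected, edges)
    a = _bin_fractions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def drift_status(psi: float) -> str:
    """Map a PSI value to a label using rule-of-thumb cut-offs (0.1 and 0.25)."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate"
    return "significant"


if __name__ == "__main__":
    baseline = [i / 1000 for i in range(1000)]  # feature values seen in training
    live = [x + 0.5 for x in baseline]          # same feature, shifted upward
    psi = population_stability_index(baseline, live)
    print(f"PSI={psi:.2f} -> {drift_status(psi)}")
```

Binning on the baseline's quantiles, rather than fixed-width bins, keeps every bin populated at training time, so the score reacts to where live traffic moves rather than to sparse tails.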

Application Integration & APIs

The best model is useless if it isn't seamlessly integrated into a user-facing application. We design AI capabilities as well-documented, versioned APIs (often built with FastAPI, or exposed through GraphQL) that front-end clients and other microservices can consume easily. This service-oriented architecture, a cornerstone of our consulting and venture-building services, keeps the AI component modular and independently updatable, so it doesn't become a monolithic bottleneck. Security, rate limiting, and clear documentation are paramount at this layer.
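Rate limiting is often handled by an API gateway or middleware, but the underlying algorithm is small. Here is a minimal sketch of a token bucket, one common approach, in plain Python: each client may burst up to `capacity` requests, and tokens refill at `rate` per second. Keying buckets by API key, the `RateLimiter` wrapper, and the example rate and capacity values are hypothetical choices; the clock is injectable so the behavior can be tested deterministically.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows bursts up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        if rate <= 0 or capacity <= 0:
            raise ValueError("rate and capacity must be positive")
        self.rate = rate
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)  # start full so clients can burst immediately
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Consume `cost` tokens if available; return whether the request may proceed."""
        now = self._clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self._tokens = min(self.capacity, self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


class RateLimiter:
    """Keeps one in-memory bucket per API key (single-process only)."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self._rate = rate
        self._capacity = capacity
        self._clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, api_key: str) -> bool:
        bucket = self._buckets.get(api_key)
        if bucket is None:
            bucket = TokenBucket(self._rate, self._capacity, self._clock)
            self._buckets[api_key] = bucket
        return bucket.allow()


if __name__ == "__main__":
    limiter = RateLimiter(rate=5.0, capacity=10)
    results = [limiter.allow("client-123") for _ in range(12)]
    print(results.count(True), "of 12 requests allowed")
```

In a FastAPI service, a check like `limiter.allow(api_key)` could run in a dependency or middleware and return HTTP 429 (Too Many Requests) when it fails. With several replicas behind a load balancer, the bucket state would need to live in a shared store such as Redis rather than in process memory.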

Why This Stack Matters for Venture Success

Adopting a standardized stack is not about limiting innovation; it's about accelerating it. By settling foundational technology decisions up front, we free our teams to focus on what truly differentiates a venture: the problem being solved and the user experience. This stack provides the guardrails for building AI systems that are not just intelligent but also reliable, scalable, and maintainable. As noted in McKinsey's 2023 State of AI report, companies that excel at AI best practices, including a robust tech foundation, capture significantly more value from their investments.

Building with this stack from the outset de-risks the technical journey and sets ventures on a path to sustainable growth. It allows us to fail fast, learn quickly, and scale with confidence. If you're embarking on building an AI-powered product and want to leverage a battle-tested approach, visit our contact page to start a conversation about how we can build it together.

Donkey Ideas is a creative consulting studio that helps entrepreneurs and businesses turn bold ideas into reality. We share insights on business strategy, financial modeling, and project management — and partner with clients to take ideas from concept to launch.